8 Technical SEO Aspects You Should Know In 2022

Search engines give preferential treatment in search results to websites that exhibit specific technical characteristics, and technical SEO is the work you do to ensure your website has them.

Below is a list of critical aspects to check so that your technical SEO is up to par. Getting them right helps ensure that your site's security and structure meet the expectations of search engine algorithms and are rewarded in search results accordingly.

What is technical SEO?

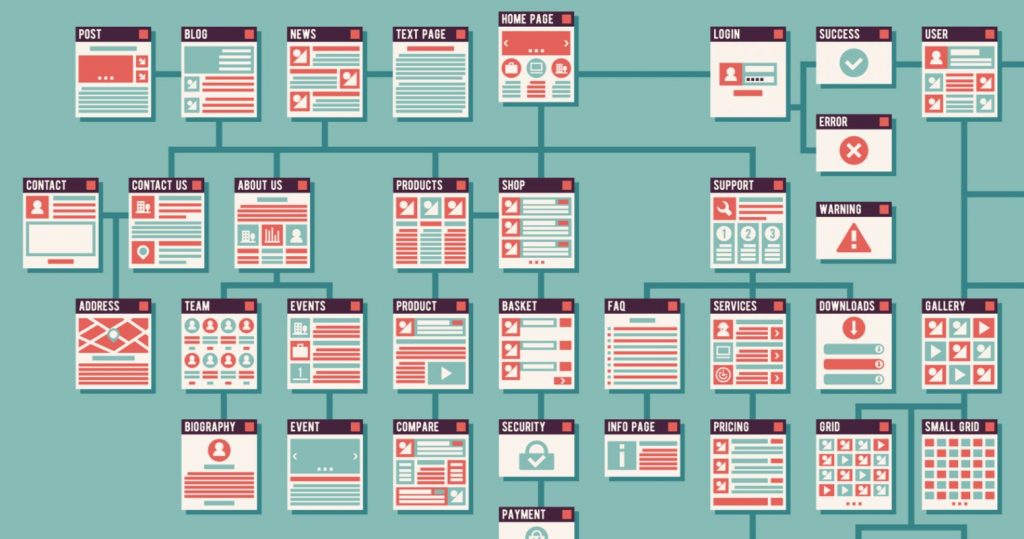

The process of ensuring that a website meets the technical requirements of modern search engines to improve organic rankings is known as technical SEO. Crawling, indexing, rendering, and website architecture are all aspects of technical SEO.

What is the importance of technical SEO?

You can have the best site in the world with the best content. However, if your technical SEO is seriously flawed, you are unlikely to rank.

At the most basic level, Google and other search engines must be able to find, crawl, render, and index the pages on your website. For your site to be fully optimized for technical SEO, its pages must also be secure, mobile optimized, free of duplicate content, and fast-loading, and they must meet many other requirements besides.

That is not to say that your technical SEO must be flawless to rank. However, the easier you make it for Google to access your content, the better your chances of ranking.

What are the technical SEO aspects when optimizing a website?

A technically sound website is fast for users and easy for search engine robots to crawl. A proper technical setup aids search engines in determining the purpose of a site. It also avoids confusion caused by, say, duplicate content. Furthermore, it does not direct visitors or search engines to dead ends caused by broken links. Here, we'll review some key characteristics of a technically optimized website.

1. It’s fast

Web pages need to load quickly. People are impatient and do not like waiting for a page to load. According to research from 2016, 53% of mobile website visitors will leave if a webpage does not open within three seconds. And the trend isn't going away: according to 2022 research, e-commerce conversion rates drop by about 0.3% for every extra second it takes for a page to load. If your website is slow, people will become frustrated and move on to another website, and you will lose that traffic.

Google understands that slow web pages provide a subpar user experience, so it prefers web pages that load quickly. A slow web page can therefore rank lower in search results than its faster counterpart, resulting in even less traffic. Page experience has been a Google ranking factor since 2021, so having pages that load quickly is more important than ever.

2. It’s crawlable for search engines

Search engines use robots to crawl, or spider, your website. Robots follow links to find content on your website, so a good internal linking structure helps them understand which content on your site is most important. However, there are additional methods for guiding robots.

The robots.txt file

The robots.txt file on your site can be used to direct robots. It's a potent tool that should be handled with care. A minor error could prevent robots from crawling (essential parts of) your site. In the robots.txt file, people may unintentionally block their site's CSS and JS files. These files contain code that instructs browsers on how your site should appear. If those files are blocked, search engines cannot determine whether your site is functioning correctly.
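To make the risk concrete, here is a minimal sketch in Python that checks which URLs a set of robots.txt rules would block. It uses the standard library's `urllib.robotparser`. The rules, the `example.com` URLs, and the `blocked_urls` helper are assumptions made up for illustration, not taken from any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that accidentally blocks the folder holding CSS and JS.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /assets/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules forbid for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical URLs: the home page plus one stylesheet and one script.
urls = [
    "https://example.com/",
    "https://example.com/assets/site.css",
    "https://example.com/assets/app.js",
]

for url in blocked_urls(ROBOTS_TXT, urls):
    print("blocked:", url)
```

Running a check like this against your own rules before publishing them is one way to catch an overly broad Disallow line.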

The robots meta tag

If you want search engine robots to crawl a page but keep it out of the search results for some reason, use the robots meta tag to tell them. You can also use the robots meta tag to instruct them to crawl a page but not follow the links on that page.
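As an illustration, the sketch below reads the robots meta tag from a page and reports what it asks robots to do. It relies only on Python's built-in `html.parser`. The sample page and the `robots_directives` helper are hypothetical; `noindex` and `nofollow` are the standard directive values.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            for directive in content.split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def robots_directives(html):
    """Return the set of robots directives found in the HTML string."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page that should be crawled but kept out of the search results.
page = '<html><head><meta name="robots" content="noindex, follow"></head><body></body></html>'

directives = robots_directives(page)
print("may be indexed:", "noindex" not in directives)
print("links may be followed:", "nofollow" not in directives)
```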

3. It doesn’t have (many) dead links

Landing on a page that doesn't exist may be even more annoying for visitors than a slow page. People will see a 404 error page if they click on a link that leads to a non-existent page on your site.

Furthermore, search engines dislike finding these error pages. They also tend to find more dead links than visitors do because they follow every link they encounter, even hidden ones.

To avoid unnecessary dead links, always redirect the URL of a page when deleting or moving it. Ideally, redirect it to a page that replaces the old one.
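The idea can be sketched as a simple redirect map. In the Python example below, a link counts as dead when it neither exists nor redirects to a page that exists. The paths, the `resolve_redirect` and `dead_links` helpers, and the hop limit are assumptions chosen for illustration. A real site would usually configure redirects in its web server or CMS.

```python
def resolve_redirect(path, redirects, max_hops=10):
    """Follow the redirect map until reaching a path that is not redirected.

    Raises ValueError if the redirects loop or the chain exceeds max_hops.
    """
    seen = {path}
    hops = 0
    while path in redirects:
        path = redirects[path]
        hops += 1
        if path in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {path}")
        seen.add(path)
    return path


def dead_links(links, live_pages, redirects):
    """Return links that neither exist nor redirect to an existing page."""
    dead = []
    for link in links:
        try:
            target = resolve_redirect(link, redirects)
        except ValueError:
            dead.append(link)
            continue
        if target not in live_pages:
            dead.append(link)
    return dead


# Hypothetical site: one moved page with a working redirect, one redirect
# that points at a page which no longer exists, and one link with no redirect.
redirects = {"/old-pricing": "/pricing", "/2019-sale": "/sale"}
live_pages = {"/", "/pricing"}
links = ["/pricing", "/old-pricing", "/2019-sale", "/about-us"]

print(dead_links(links, live_pages, redirects))
```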

4. It doesn’t confuse search engines with duplicate content

If the same content appears on multiple pages of your site or even on other sites, search engines may become confused about which page to rank. As a result, they may lower the ranking of all pages with the same content.

Unfortunately, you may be dealing with duplicate content issues without realizing it. Different URLs may display the same content for technical reasons. This makes no difference to a visitor, but it does to a search engine, which will see the same content on a different URL.

This problem, fortunately, has a technical solution. Using the canonical link element, you can specify the original page - or the page you want to rank in search engines.
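As a rough sketch, the Python snippet below maps several URL variants of the same page to one canonical URL and prints the matching canonical link element. The list of parameters to strip and the `example.com` URLs are assumptions for illustration. Which parameters are safe to ignore depends on your site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these query parameters never change the page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}


def canonical_url(url):
    """Drop ignored parameters and the fragment, and lowercase the host."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path or "/", urlencode(query), "")
    )


def canonical_link_tag(url):
    """Build the <link rel="canonical"> element for the page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


# Two hypothetical URLs that display the same content.
variants = [
    "https://Example.com/shoes?utm_source=mail&color=red#reviews",
    "https://example.com/shoes?color=red&sessionid=abc123",
]

for variant in variants:
    print(canonical_link_tag(variant))
```

Both variants produce the same element, which is the point: every duplicate URL names the same preferred page.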

5. It’s secure

A technically optimized website is a secure website. Making your website safe for users and protecting their privacy is a must nowadays. You can do many things to secure your (WordPress) website, and one of the most important is to use HTTPS.

HTTPS encrypts the data transmitted between the browser and the website, so that third parties cannot read it in transit. Google recognizes the value of security and has made HTTPS a ranking signal: all else being equal, secure websites can rank higher than their unsecured counterparts.

In most browsers, you can quickly check whether your website uses HTTPS. If it does, you'll see a lock on the left side of your browser's address bar. If the words "not secure" appear, you (or your developer) have some work to do!

6. It has structured data

Structured data assists search engines in better understanding your website, content, or company. Using structured data, you can tell search engines what kind of product you sell or which recipes you have on your website. Furthermore, it will allow you to provide a wealth of information about those products or recipes.

This information should be provided in a specific format (described on Schema.org) so that search engines can easily find and understand it. It allows them to see your content in context.

Structured data does more than help search engines understand your content. It also makes your content eligible for rich results, which stand out in search results with stars or extra details.
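One common way to provide structured data is a JSON-LD script embedded in the page. The sketch below builds one for a product using Python's `json` module. The `Product`, `Offer`, and `AggregateRating` types and their properties come from Schema.org. The product details and the `product_json_ld` helper are made up for illustration.

```python
import json


def product_json_ld(name, description, price, currency, rating_value, review_count):
    """Return a JSON-LD <script> element describing a product."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
    }
    # Escape "</" so that text inside the data cannot close the script element.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical product used only as sample input.
snippet = product_json_ld(
    "Trail Shoe", "Lightweight running shoe", 89.5, "USD", 4.6, 128
)
print(snippet)
```

The rating fields are the kind of data that can appear as stars in search results, provided the values reflect real reviews shown on the page.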

7. It has an XML sitemap

An XML sitemap is a list of all of your website's pages. It acts as a guide for search engines when they visit your site. You can use it to ensure that search engines do not miss any important content on your website. The XML sitemap is frequently organized into posts, pages, tags, or other custom post types, and the entry for each page includes the number of images and the last modified date.

Ideally, a website would not need an XML sitemap: if its internal linking structure connects all content nicely, robots can find everything without one. However, not all websites are well-structured, and having an XML sitemap won't hurt. For that reason, your website should include an XML sitemap.
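If your CMS or SEO plugin does not generate a sitemap for you, a basic one is easy to produce. The sketch below uses Python's `xml.etree.ElementTree` and the namespace from the sitemaps.org protocol. The page list and the `build_sitemap` helper are assumptions for illustration, and only the URL and last modified date are written for each page. It needs Python 3.8 or later.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs.

    last_modified is expected as a date string such as "2022-03-02".
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Hypothetical pages used only as sample input.
pages = [
    ("https://example.com/", "2022-01-15"),
    ("https://example.com/blog/technical-seo/", "2022-03-02"),
]

print(build_sitemap(pages))
```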

8. International websites use hreflang

If your site targets more than one country, or several countries where the same language is spoken, search engines may need help determining which countries or languages you are trying to reach. If you help them, they can show people the correct website for their area in the search results.

Hreflang tags help you accomplish this. You can use them to specify the country and language for which each page is intended. This also helps avoid duplicate content problems: even if your US and UK sites display the same content, Google will recognize that they are written for different regions.
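For the US and UK case, the tags could be generated along these lines. This Python sketch is only an illustration: the `hreflang_tags` helper and the `example.com` URLs are assumptions, while `en-us`, `en-gb`, and the `x-default` fallback are standard hreflang values.

```python
from html import escape


def hreflang_tags(alternates, default_url=None):
    """Build <link rel="alternate"> elements for each language-region version.

    alternates maps hreflang codes such as "en-us" to the URL for that audience.
    default_url, if given, is used for visitors who match none of the codes.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(code, quote=True)}" '
        f'href="{escape(url, quote=True)}">'
        for code, url in sorted(alternates.items())
    ]
    if default_url:
        tags.append(
            f'<link rel="alternate" hreflang="x-default" '
            f'href="{escape(default_url, quote=True)}">'
        )
    return tags


# Hypothetical US and UK versions of the same page.
alternates = {
    "en-us": "https://example.com/us/",
    "en-gb": "https://example.com/uk/",
}

for tag in hreflang_tags(alternates, default_url="https://example.com/"):
    print(tag)
```

The same full set of tags should appear on every version of the page, so that each version points to all the others and to itself.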

Hopefully, you can use the technical SEO and EverRanks information compiled above to improve the ranking of your website as well as the performance of your business.
